Overview

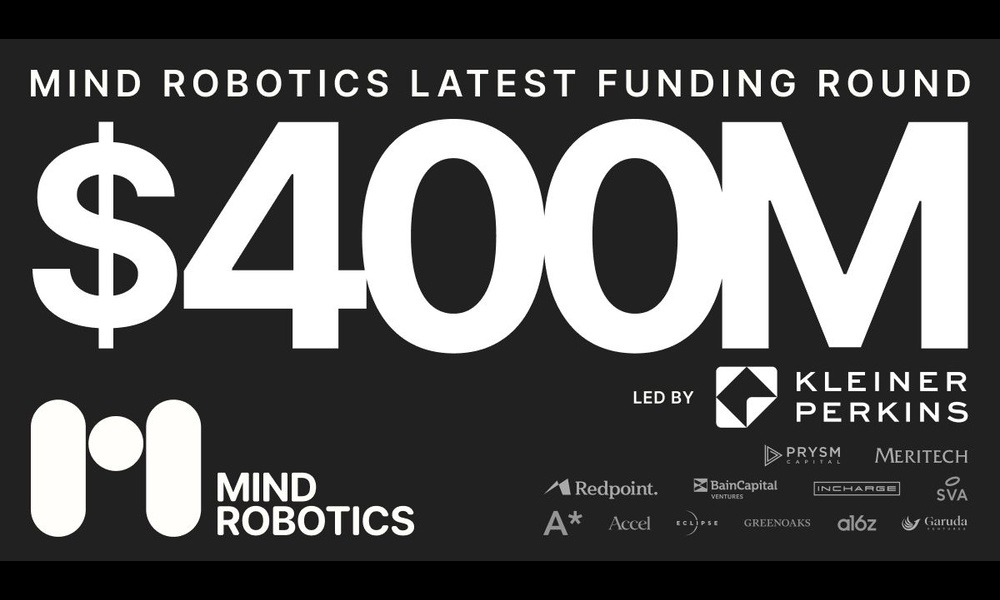

In a landmark move for industrial automation, Mind Robotics Inc., a startup spun out of Rivian Automotive Inc. and founded by Rivian CEO RJ Scaringe, recently secured $400 million in funding to accelerate the deployment of AI-driven robots on factory floors. This infusion of capital underscores a broader shift: manufacturing lines are moving beyond repetitive, pre-programmed tasks toward machines that can handle the fiddly, judgment-based work that has traditionally required human dexterity and decision-making. This tutorial unpacks the key components of building and deploying such AI-powered robots, drawing on the approach Mind Robotics is pursuing. Whether you're an engineering lead, a manufacturing executive, or a robotics enthusiast, you'll walk away with a practical roadmap for integrating cognitive robotics into your own production environment.

Prerequisites

Before diving into implementation, ensure your team and infrastructure meet these baseline requirements:

- Understanding of Manufacturing Pain Points: Identify tasks that are tedious, error-prone, or require subtle judgment—like inserting flexible cables, inspecting painted surfaces, or assembling delicate components.

- Foundational AI/ML Knowledge: Familiarity with reinforcement learning, computer vision, and sensor fusion is helpful. The code examples assume basic Python and some PyTorch experience (Stable Baselines3, used in Step 2, is built on PyTorch).

- Robotics Hardware: Access to collaborative robots (cobots) or industrial arms with force sensing and vision systems. Mind Robotics' approach appears to rely on close hardware-software co-design.

- Investment & Partnerships: You won't need a $400M round, but you will need funding or strategic alliances for R&D and scaling. Even a pilot project requires a budget for compute, sensors, and integration.

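- Regulatory & Safety Compliance: Understand ISO 10218 (safety requirements for industrial robots and robot systems), ISO 13849 (safety-related parts of machine control systems), and, if you deploy cobots, ISO/TS 15066 (collaborative robot operation).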

Step-by-Step Guide

Step 1: Identify High-Value, Judgment-Based Tasks

Start by auditing your production line. Look for operations that currently rely on human intuition—quality checks with ambiguous defects, wire harnessing, or part orientation adjustments. Mind Robotics specifically targets “fiddly” work that resists traditional automation. Map each task to a set of sensory inputs (visual, tactile, force) and decision criteria. For example, a connector insertion task might require perceiving slight misalignment and applying variable force. Document these as task primitives.
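To make the audit concrete, each task primitive can be recorded as a small structured record listing its sensory inputs and decision criteria. The sketch below is hypothetical: the `TaskPrimitive` class, its fields, and the 15 N / 0.5 mm thresholds for connector insertion are illustrative assumptions, not values from Mind Robotics or any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskPrimitive:
    """One fiddly operation: what it senses and how success is judged.

    Field names and thresholds are hypothetical examples, not a standard schema.
    """
    name: str
    sensors: tuple[str, ...]        # e.g. ("wrist_camera", "force_torque")
    max_force_n: float              # contact force that must never be exceeded (newtons)
    position_tolerance_mm: float    # acceptable final misalignment (millimetres)

    def is_success(self, peak_force_n: float, final_error_mm: float) -> bool:
        """Judge one attempt against this primitive's decision criteria."""
        return (peak_force_n <= self.max_force_n
                and abs(final_error_mm) <= self.position_tolerance_mm)


connector_insertion = TaskPrimitive(
    name="connector_insertion",
    sensors=("wrist_camera", "force_torque"),
    max_force_n=15.0,
    position_tolerance_mm=0.5,
)

print(connector_insertion.is_success(peak_force_n=12.0, final_error_mm=0.3))  # True
print(connector_insertion.is_success(peak_force_n=18.0, final_error_mm=0.1))  # False: too much force
```

Keeping primitives as data rather than prose means the same thresholds can later drive simulator rewards and pilot pass/fail checks.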

Step 2: Simulate and Train AI Models

Use a physics simulator (e.g., MuJoCo, Gazebo, or NVIDIA Isaac Sim) to create a digital twin of your factory cell, then train reinforcement learning (RL) agents in it to master each primitive. Below is a simplified Python snippet that trains a PPO agent with Stable Baselines3 on a custom pick-and-place environment; the force feedback lives in that environment's observations and reward, not in the training script itself:

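```python
# Stable Baselines3 >= 2.0 expects Gymnasium environments, not the legacy gym package
from stable_baselines3 import PPO

# Hypothetical module: CustomFactoryEnv must subclass gymnasium.Env and expose
# force-torque readings in its observations
from your_custom_env import CustomFactoryEnv

# Disable rendering during training; "human" rendering slows learning considerably
env = CustomFactoryEnv(render_mode=None)

# PPO with a feed-forward (MLP) policy; TensorBoard logs go to ./logs/
model = PPO("MlpPolicy", env, verbose=1, tensorboard_log="./logs/")
model.learn(total_timesteps=100_000)  # a starting budget; contact-rich tasks usually need far more
model.save("factory_ai_robot_v1")  # writes factory_ai_robot_v1.zip
```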

Key details: The environment must include reward shaping that penalizes excessive force and rewards precision. Mind Robotics likely uses similar RL pipelines, fine-tuned with real-world data after simulation.
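That reward shaping might be expressed as a function called from the custom environment's `step()` method. The sketch below is an assumption-laden example, not Mind Robotics' reward: the inverse-distance precision term, the 15 N force limit, and the 0.1 penalty weight are hypothetical and would need tuning per task.

```python
def shaped_reward(
    position_error_mm: float,
    contact_force_n: float,
    force_limit_n: float = 15.0,        # hypothetical safe-contact limit
    precision_scale_mm: float = 1.0,    # error at which the precision term halves
    force_penalty_weight: float = 0.1,  # hypothetical weight per newton over the limit
) -> float:
    """Reward precision and penalize force above a limit.

    - Precision term: 1.0 at zero error, decaying toward 0 as error grows.
    - Force term: zero below the limit, growing linearly with each newton over it.
    """
    precision = 1.0 / (1.0 + abs(position_error_mm) / precision_scale_mm)
    excess_force = max(0.0, contact_force_n - force_limit_n)
    return precision - force_penalty_weight * excess_force


print(shaped_reward(0.0, 10.0))  # 1.0: perfectly placed, gentle contact
print(shaped_reward(1.0, 10.0))  # 0.5: 1 mm off, gentle contact
print(shaped_reward(0.0, 25.0))  # 0.0: perfectly placed, but 10 N over the limit
```

Keeping the force penalty at zero below the limit lets the agent use the contact force it genuinely needs, while still making hard collisions costly.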

Step 3: Integrate AI with Existing Factory Systems

Deploy the trained model onto an edge device (e.g., NVIDIA Jetson or an industrial PC) connected to the robot controller. Use gRPC or ROS 2 for communication, and integrate with your MES (Manufacturing Execution System) to receive work orders and send status updates. A typical architecture looks like this:

- Sensors → Cameras, force-torque sensors, tactile skins → feed real-time data to AI inference engine.

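- AI Engine → Outputs joint positions, gripper force, and movement trajectories → sent to the robot controller via ROS 2 (e.g., ros2_control) or the vendor's controller API. The robot's URDF model describes its kinematics but is not itself a communication interface.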

- MES → Receives completion signals, triggers next task.
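The flow in that list can be sketched as a single control cycle: sensor frames go into the AI engine, commands come out, and a completion status goes back to the MES. Everything here is hypothetical: the dataclass fields, the `policy` stand-in (which simply cancels the measured offset instead of running a trained model), and the status dictionary. A real deployment would publish commands to the controller over ROS 2 or gRPC and report status through the MES's own interface.

```python
from dataclasses import dataclass


@dataclass
class SensorFrame:
    """One synchronized snapshot from the cell's sensors (hypothetical fields)."""
    timestamp_s: float
    wrist_force_n: float
    part_offset_mm: float   # misalignment estimated by the vision system


@dataclass
class RobotCommand:
    """What the AI engine hands to the robot controller (hypothetical fields)."""
    target_offset_mm: float  # corrective move to apply
    gripper_force_n: float
    done: bool


def policy(frame: SensorFrame) -> RobotCommand:
    """Stand-in for the trained model: move to cancel the measured offset."""
    done = abs(frame.part_offset_mm) < 0.1
    return RobotCommand(target_offset_mm=-frame.part_offset_mm,
                        gripper_force_n=10.0, done=done)


def run_cycle(frames: list[SensorFrame], work_order_id: str) -> dict:
    """Sensors -> AI engine -> controller, then build the MES status update."""
    commands = []
    for frame in frames:
        cmd = policy(frame)
        commands.append(cmd)  # in production: publish to the controller here
        if cmd.done:
            break
    status = "complete" if commands and commands[-1].done else "needs_operator"
    return {"work_order": work_order_id, "status": status, "steps": len(commands)}


frames = [SensorFrame(0.00, 5.0, 0.8), SensorFrame(0.01, 6.0, 0.05)]
print(run_cycle(frames, "WO-1001"))  # {'work_order': 'WO-1001', 'status': 'complete', 'steps': 2}
```

Returning `needs_operator` when the cycle ends without success gives the MES a clear signal to route the part to a person rather than silently stalling the line.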

Mind Robotics' $400M in funding will likely help scale this integration across multiple factories, pushing model updates from the cloud while keeping inference on the edge to maintain low latency.
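One practical consequence of pairing cloud-delivered model updates with edge inference is that each new model should be timed on the edge device before it goes live. The sketch below assumes a hypothetical 100 Hz control loop (a 10 ms budget per cycle) and gates on the 99th-percentile latency; `fake_model` is a stand-in for whatever runtime actually executes the model.

```python
import math
import time


def percentile(samples_ms, pct):
    """Nearest-rank percentile (pct in 0-100) of latency samples in milliseconds."""
    ordered = sorted(samples_ms)
    if not ordered:
        raise ValueError("no latency samples")
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def time_inference(infer, inputs):
    """Time each call to `infer` on the edge device; returns latencies in ms."""
    samples = []
    for x in inputs:
        start = time.perf_counter()
        infer(x)
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def fits_control_loop(samples_ms, loop_hz=100.0, pct=99.0):
    """True if the pct-th percentile latency fits within one control period."""
    budget_ms = 1000.0 / loop_hz
    return percentile(samples_ms, pct) <= budget_ms


def fake_model(obs):
    """Stand-in for the real runtime's predict call (hypothetical)."""
    return sum(v * 0.5 for v in obs)


latencies = time_inference(fake_model, [[0.1] * 32 for _ in range(500)])
print(f"p99 = {percentile(latencies, 99):.3f} ms, fits budget: {fits_control_loop(latencies)}")
```

Gating on a high percentile rather than the mean matters here, because a control loop is broken by its occasional slow cycles, not by its average one.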

Step 4: Pilot, Validate, and Iterate

Run a controlled pilot on one production line. Monitor key metrics: cycle time, defect rate, and operator intervention frequency. Use A/B testing against human-only stations. Adjust the RL reward function based on real-world failures. For example, if the robot drops parts, add a penalty for velocity spikes. Mind Robotics’ approach emphasizes continuous learning—so set up a feedback loop where edge cases are logged and used for retraining.
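For the A/B comparison, the pilot metrics can be computed per station, and the defect-rate difference checked for statistical significance before acting on it. The sketch below uses a standard two-proportion z-test; the `StationLog` schema is a hypothetical assumption, and the counts in the usage lines are made up purely to show the call, not pilot results.

```python
import math
from dataclasses import dataclass


@dataclass
class StationLog:
    """Counters collected for one station during the pilot (hypothetical schema)."""
    units: int
    defects: int
    interventions: int
    total_cycle_time_s: float

    @property
    def defect_rate(self) -> float:
        return self.defects / self.units

    @property
    def mean_cycle_time_s(self) -> float:
        return self.total_cycle_time_s / self.units

    @property
    def interventions_per_100_units(self) -> float:
        return 100.0 * self.interventions / self.units


def defect_rate_z_test(a: StationLog, b: StationLog) -> tuple[float, float]:
    """Two-proportion z-test on defect rates; returns (z, two-sided p-value)."""
    pooled = (a.defects + b.defects) / (a.units + b.units)
    se = math.sqrt(pooled * (1 - pooled) * (1 / a.units + 1 / b.units))
    if se == 0:  # no defects (or only defects) at both stations: nothing to compare
        return 0.0, 1.0
    z = (a.defect_rate - b.defect_rate) / se
    p_two_sided = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_two_sided


# Made-up counts purely to show the call; substitute your own pilot logs.
robot = StationLog(units=2000, defects=18, interventions=40, total_cycle_time_s=84_000.0)
human = StationLog(units=2000, defects=36, interventions=0, total_cycle_time_s=90_000.0)
z, p = defect_rate_z_test(robot, human)
print(f"cycle time: robot {robot.mean_cycle_time_s:.1f} s, human {human.mean_cycle_time_s:.1f} s")
print(f"robot interventions per 100 units: {robot.interventions_per_100_units:.1f}")
print(f"defect rate {robot.defect_rate:.2%} vs {human.defect_rate:.2%}, z = {z:.2f}, p = {p:.3f}")
```

Tracking interventions per 100 units alongside defects keeps a robot station from looking good only because operators are quietly fixing its mistakes.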

Step 5: Scale and Sustain

Once validated, replicate the setup across other lines. This phase requires robust DevOps for AI models: versioning, rollback capabilities, and over-the-air updates. Invest in system monitoring dashboards (e.g., Grafana + Prometheus) for robot health and model drift. Mind Robotics' funding round directly supports this scaling, enabling multi-site deployments of its AI robots.
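The versioning and rollback requirement can be illustrated with a minimal in-memory model registry. This is a hypothetical sketch: `ModelRegistry` and its methods are invented for illustration, and a production setup would back them with a real artifact store and push the active version to edge devices over the air.

```python
class ModelRegistry:
    """Versioned models with promote and rollback (hypothetical, in-memory)."""

    def __init__(self):
        self._artifacts = {}  # version -> artifact path or URI
        self._history = []    # versions in the order they went live

    def register(self, version: str, artifact_uri: str) -> None:
        if version in self._artifacts:
            raise ValueError(f"version {version} already registered")
        self._artifacts[version] = artifact_uri

    def promote(self, version: str) -> None:
        """Make a registered version the live one."""
        if version not in self._artifacts:
            raise KeyError(version)
        self._history.append(version)

    @property
    def active(self):
        return self._history[-1] if self._history else None

    @property
    def active_artifact(self):
        return self._artifacts[self.active] if self.active else None

    def rollback(self) -> str:
        """Revert to the previously live version and return it."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self._history[-1]


reg = ModelRegistry()
reg.register("v1", "models/factory_ai_robot_v1.zip")
reg.register("v2", "models/factory_ai_robot_v2.zip")
reg.promote("v1")
reg.promote("v2")
print(reg.active)           # v2
print(reg.rollback())       # v1
print(reg.active_artifact)  # models/factory_ai_robot_v1.zip
```

Keeping the full promotion history, rather than just a "current" pointer, is what makes a one-step rollback possible when a new model misbehaves on one line.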

Common Mistakes to Avoid

- Over-reliance on Pre-Trained Models: Off-the-shelf computer vision or manipulation models rarely capture factory-specific nuances. Always fine-tune on your own task primitives and environmental conditions (lighting, part variance).

- Neglecting Safety: AI-driven robots can exhibit unpredictable behavior during early deployment. Implement collaborative safety zones, emergency stops, and lightweight force-limited robots until the model is well-validated.

- Ignoring Data Quality: Garbage in, garbage out. Ensure your simulation environment accurately mirrors real-world physics (friction, compliance, sensor noise). Mind Robotics likely invests heavily in high-fidelity simulation to avoid this pitfall.

- Underestimating Integration Complexity: Getting the AI to work is only half the battle. Connecting to existing PLCs, MES, and safety networks often requires custom drivers and extensive testing. As a rough rule of thumb, budget around 30% of your project timeline for integration alone.

- Lack of Human-AI Collaboration Design: Fully replacing human workers is unrealistic for this kind of work. Design for hybrid workflows where the AI handles the fiddly parts and humans supervise, troubleshoot, or handle exceptions. Mind Robotics' approach centers on augmenting, not replacing, factory workers.
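The safety and hybrid-workflow points above can be combined in a software guard that sits between the policy and the controller: it clamps commanded speed and force, and hands low-confidence steps to a human operator. This is a hypothetical sketch: the limits, the `Action` fields, and the assumption that your model reports a confidence score are illustrative, and a guard like this supplements, rather than replaces, the safety-rated stops and monitoring required under ISO 10218 and ISO 13849.

```python
from dataclasses import dataclass, replace

# Hypothetical commissioning-phase limits; derive real values from your risk assessment.
SPEED_LIMIT_MM_S = 250.0
FORCE_LIMIT_N = 20.0
MIN_CONFIDENCE = 0.8  # below this, the step is handed to a human operator


@dataclass(frozen=True)
class Action:
    speed_mm_s: float   # commanded tool speed (magnitude)
    force_n: float      # commanded contact/gripper force (magnitude)
    confidence: float   # policy's confidence in [0, 1]; assumes your model reports one


def guard(action: Action):
    """Return ("escalate", None) for low-confidence steps, else ("execute", clamped action)."""
    if action.confidence < MIN_CONFIDENCE:
        return "escalate", None
    clamped = replace(
        action,
        speed_mm_s=min(action.speed_mm_s, SPEED_LIMIT_MM_S),
        force_n=min(action.force_n, FORCE_LIMIT_N),
    )
    return "execute", clamped


print(guard(Action(speed_mm_s=400.0, force_n=30.0, confidence=0.95)))
# ('execute', Action(speed_mm_s=250.0, force_n=20.0, confidence=0.95))
print(guard(Action(speed_mm_s=100.0, force_n=5.0, confidence=0.4)))
# ('escalate', None)
```

Escalating instead of guessing is what turns "humans handle exceptions" into an explicit, loggable path, and every escalated step doubles as an edge case for retraining.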

Summary

This guide has walked you through the essential steps to deploy AI-powered industrial robots, inspired by the strategic moves of Mind Robotics and its recent $400M funding round. From identifying judgment-based tasks to training RL models in simulation, integrating with factory systems, and avoiding common pitfalls, you now have a structured approach. The key takeaway: success hinges on a combination of high-quality data, iterative learning, and close human-robot collaboration. As Mind Robotics scales, these practices are likely to become common across the industry, so start preparing your factory for the era of cognitive automation.